Last month I tried to write a small recursive function in my codebase and blanked. Not a hard one. Just a function that calls itself until it has worked through a list.
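For illustration, a function of that kind might look like the minimal Python sketch below. The name `recursive_sum` and the task of summing numbers are assumptions made for the example, not the actual code from the codebase.

```python
def recursive_sum(numbers):
    """Add up a list of numbers by recursion (hypothetical example)."""
    # Base case: an empty list has nothing left to work through.
    if not numbers:
        return 0
    # Recursive case: take the first item, then call the function
    # again on the rest of the list.
    return numbers[0] + recursive_sum(numbers[1:])
```

Each call handles one item and hands a shorter list to the next call, so the recursion stops once the list is empty.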

I'd been letting Claude write those little pieces for me, and somewhere in the past year the skill just... left.

I used to think the danger of AI was hallucination. Wrong answers, bad code, made-up facts. That stuff still matters. But the more annoying problem is what happens when the answer is good enough that you stop doing the work yourself.

Your brain on ChatGPT

MIT researchers put EEG sensors on 54 people while they wrote essays. One group used ChatGPT. One used Google. One used nothing.

The ChatGPT group showed the weakest brain connectivity. The no-tools group showed the strongest. I don't want to overstate an EEG study, but the direction is hard to ignore.

What came next is the part that bothered me. In a fourth session, they took ChatGPT away from the first group. Those people still didn't show the same level of cognitive engagement as the group that had been writing without tools from the start. After months of offloading, they seemed to carry some of the habit with them. The researchers called it "cognitive debt," borrowing the term from software. Quick hacks pile up until the whole thing gets brittle.

I recognized the pattern. Reaching for Claude on things I used to think through. Copying answers without really checking whether I understood them. Whole weeks where I couldn't tell you how much of the thinking was actually mine.

Three steps, two skipped

Your brain learns through a few boring but important steps:

- Encoding: the prefrontal cortex and hippocampus help form the memory.

- Retrieval: you pull the information back out, and that act of retrieval turns knowledge into something closer to skill.

- Correction: you get something wrong, notice the mismatch, and adjust.

Paste a question into ChatGPT and copy the answer. You get a faint encoding from reading. No retrieval. No correction.

Two of the important parts are gone. It feels like learning because the answer passed through your eyes. But not much happened.

You can't get in shape watching someone else lift weights.

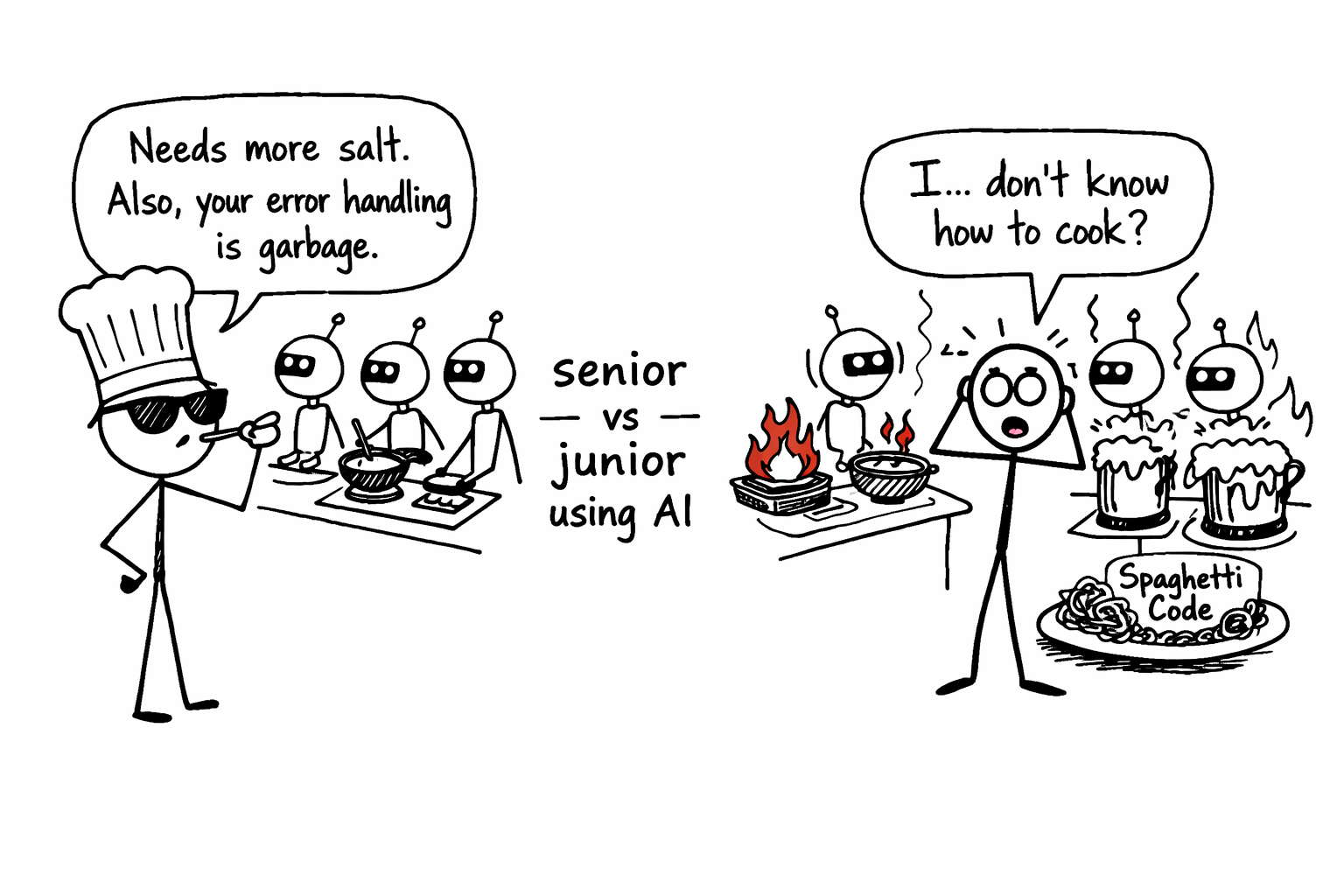

The chef and the line cook

A survey of 791 developers found something I didn't expect: senior developers use more AI-generated code than juniors do. They also spend more time fighting with it. Inspecting output, rewriting bits, debugging, sometimes throwing the whole thing away.

A senior who spent years writing code by hand is a head chef who's worked every station. They taste what the AI puts on the plate and know right away when something's off. Needs more salt. Lazy error handling. Race condition waiting to happen. They use AI to move faster, but the thinking is still theirs.

A junior who skipped that apprenticeship is running a kitchen of robot cooks without knowing how to cook. Everything looks fine until something catches fire.

Senior vs junior using AI

The output can look identical. The understanding behind it isn't.

Same technology, opposite results

Harvard built an AI tutor called PS2-PAL for physics students. The rules were strict: never give the full answer, make students attempt the problem first, adapt to each person's pace. Students using it learned more than twice as much as those in the traditional class.

Then they gave another group plain ChatGPT, no guardrails. Those students learned less than students with regular human teachers. They pasted problems, got answers, felt productive, and retained almost nothing.

Same basic technology. One setup made students sharper. The other let them coast.

The difference was whether the student's brain did the work.

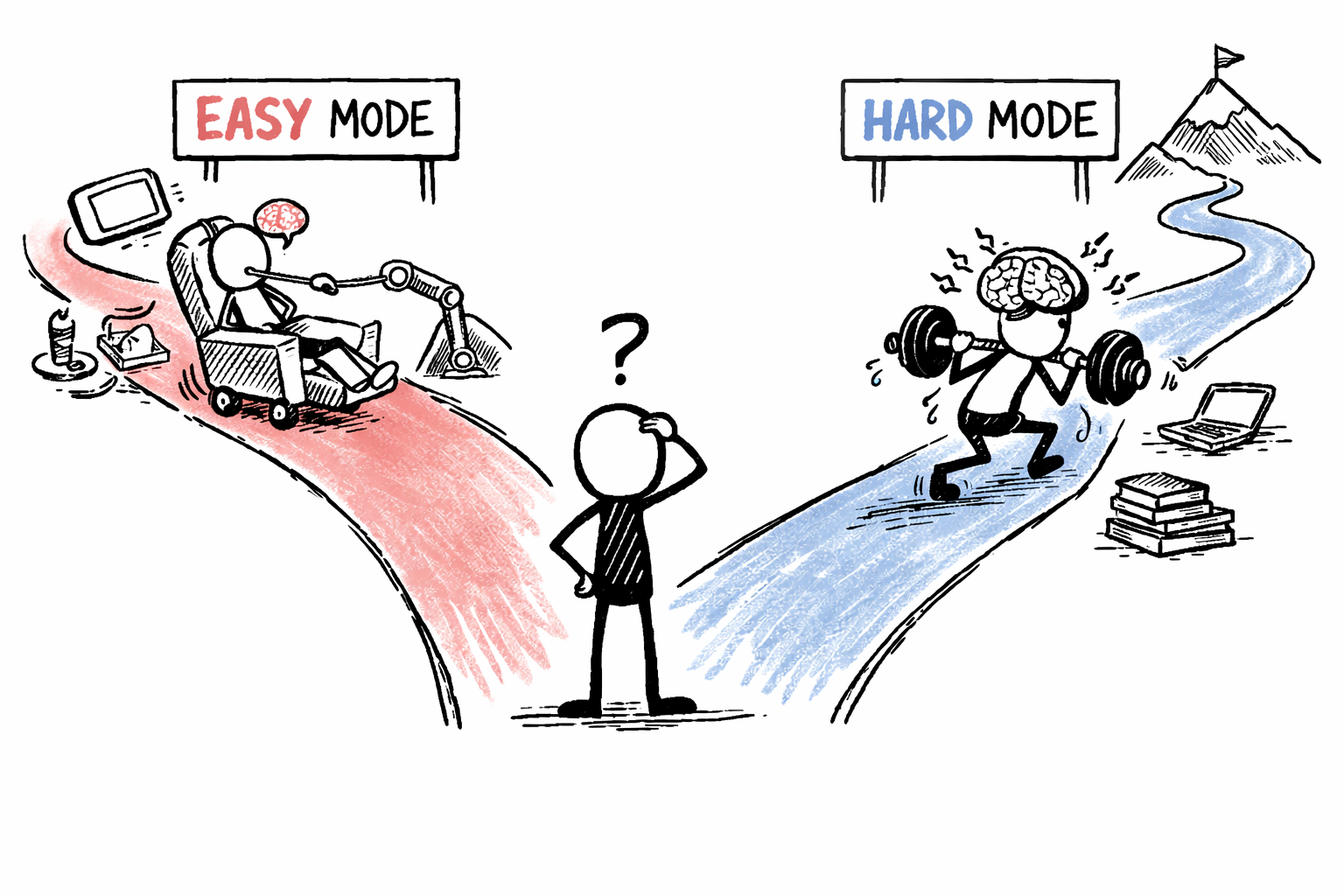

The difficulty is the point

Robert Bjork at UCLA has studied this for decades. He calls it "desirable difficulty." Making learning harder in the short term can make it much more effective later. Quizzing yourself instead of re-reading. Spreading practice out instead of cramming. Mixing problem types instead of drilling one.

Every one of these techniques requires friction. Friction is exactly what people use AI to eliminate.

Our brains are wired to conserve energy. Thinking takes effort. When a tool offers to skip the hard part, your brain takes the deal unless you consciously refuse.

How many people take the stairs when there's an elevator? Now imagine the elevator also whispers the answer to whatever you're working on.

Do the reps

This is why I made Do The Reps. It's a system prompt you add to an AI assistant.

When it's active, the AI stops handing you answers. It asks what you think first. It walks you through problems one step at a time. When you're wrong, it doesn't jump straight to the correction. It asks you to work out why. Strict mode removes the hand-holding entirely. Try to cheat by pasting the whole problem or fishing for the answer, and it calls you out.

One prompt. But it changes the dynamic from "AI does the thinking" to "AI makes you think harder."

The choice

Every time you open a chat window, you're making a small decision. Use the tool to skip the work, or use it to do better work.

For routine stuff, let the AI handle it. For the things you actually need to understand, the skills you want to keep, the knowledge that matters, do the reps.

Nobody else can do them for you.

Written with ❤️ by a human (still)