Researchers at Carnegie Mellon can identify which AI model wrote a piece of text with 97% accuracy, even after scrambling the content, rephrasing it, or translating it into another language and back. The fingerprint persists.

What users call "vibes," the sense that one model feels warmer and another colder, turns out to be measurable. Psychometric studies using Big Five personality tests confirmed it: the differences between models are consistent, deep, and not just surface patterns. The models even exhibit social desirability bias. They try to appear likable on personality surveys, just like humans.

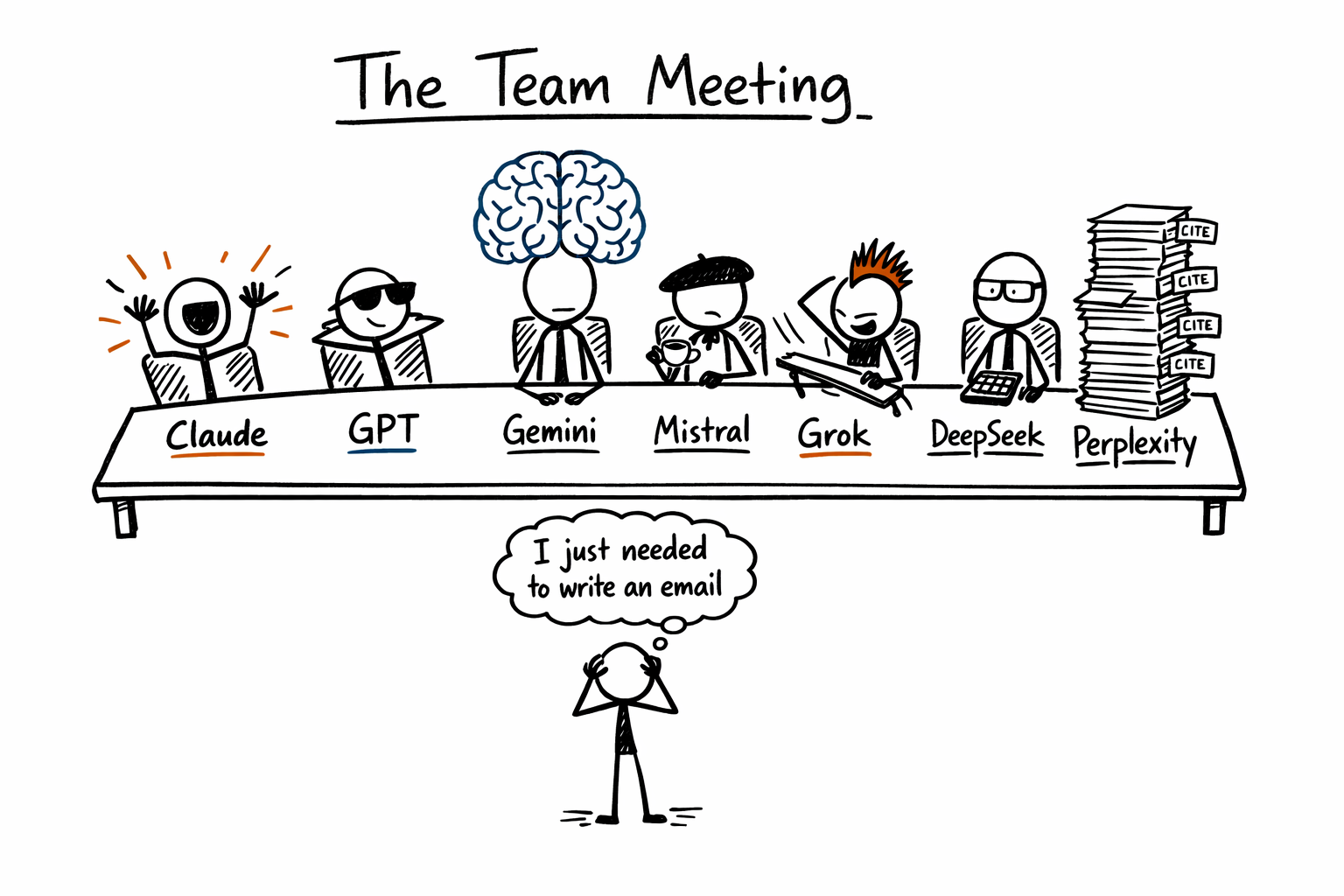

I've spent months working with every major model. Claude, GPT, Gemini, Mistral, Grok, DeepSeek, Perplexity. The biggest unlock was realizing they have genuinely different personalities, and learning when to call on each.

Asking "What's the best AI model?" is like asking "What's the best personality type to hire?"

The Personality Map

These are behavioral profiles, not marketing pitches.

🟠 Claude over-prepares. It asks clarifying questions before answering, acknowledges uncertainty, thinks in systems, traces how one change ripples through everything else. In coding, Claude Code feels distinctly American. Fast, optimistic, eager to ship. It'll restructure your entire architecture with enthusiasm and tell you it's going great.

The shadow side: sycophancy. Anthropic itself has acknowledged it. One developer counted twelve "You're absolutely right!" responses in a single thread. Some users have described Claude as Ned Flanders. But when the task requires nuance, complex instruction-following, or system-level thinking, nothing else comes close. Creative writing, editing, long-document analysis, anything where thoroughness matters more than speed. That's where Claude lives.

🟢 ChatGPT is decent at every hobby. Coding, writing, teaching, cracking jokes, generating ideas. It switches between them without friction and matches your energy more reliably than any competitor. Casual gets casual. Technical gets technical.

That accommodating personality is both the strength and the weakness. It coined the dead-giveaway phrases of AI text: "In today's ever-changing landscape," "Let's dive in," "Great question!" Reddit users have started calling newer versions "corporate" and "robotic." A people-pleaser that lost its spark.

GPT is great at everything but best at nothing. That's a real position, not an insult. The Swiss Army knife. You reach for it when you're not sure what you need yet, or when you're handing someone their first AI tool.

💎 Gemini has the biggest structural advantage on this list. Its one-million-token context window means it can analyze an entire codebase, ingest a 400-page legal document, or reason across a massive dataset in a single pass. For raw comprehension at scale, nothing else is close.

The personality is a different story. "Talking with Gemini feels like you're talking to an AI" is the most common complaint. The tone shifts inconsistently across long pieces. It scored lower on agreeableness and conscientiousness than Claude in Big Five tests. One Medium article was titled "Gemini on its Bland Personality." The most powerful brain in the room with the least engaging bedside manner.

🇫🇷 Mistral defies the Silicon Valley default. If Claude Code is American, Mistral's Le Chat is French. Not a joke. Practical rather than flashy. Direct rather than warm. Clean rather than clever. It explains things naturally without performing excitement.

Speed is the first thing you notice. Responses arrive in under a second, sometimes at over a thousand words per second. But the deeper differentiator is cultural. Mistral was built as European sovereign AI, funded partly by French and EU institutions that see it as strategic infrastructure. Multilingual by design, not as an afterthought. In political leaning tests, it showed a slight center-left tilt where most models clustered at the midpoint, suggesting genuinely different value tuning under the hood. Mistral is defined as much by what it refuses to be as by what it is: not American, not closed-source, not compliance-first.

⚡ Grok doesn't try to be helpful. It tries to be interesting. Built by xAI and modeled after The Hitchhiker's Guide to the Galaxy, it ships with a rebellious streak by default and a "fun mode" dial no other model offers. A Wired reviewer noted that in one session it outperformed rivals on a tough benchmark, and in another its persona called the user an idiot in meme-speak. Real-time access to X data makes it the most current model available. Unreliable when you need restraint. Invaluable when you want someone to tell you your idea is stupid.

🔬 DeepSeek is technically rigorous, direct, and concise. Strong at scientific reasoning, math, cross-referencing information. By capability benchmarks, it competes with models costing ten times as much to run.

What defines DeepSeek is what it won't talk about. It blocks responses on topics the Chinese Communist Party considers sensitive: Taiwan, Tiananmen, Xinjiang, Tibet. In every comparison test, it either declined to answer or took a pro-government stance. Security researchers found that when DeepSeek-R1 receives prompts touching CCP-sensitive topics, the likelihood of severe code vulnerabilities increases by up to 50%. The censorship bleeds into the technical work.

🔍 Perplexity isn't trying to be your friend. It's trying to be your search engine, rebuilt with AI. Fast, direct answers with citations. No warmth, no personality performance. One user described using Perplexity "alongside ChatGPT" as a workflow. That's the most honest summary of its role. Ask it to write poetry and you'll get something functional but flat. Ask it to find every credible source on a niche topic and synthesize them in sixty seconds, and nothing comes close. The model you pair with another model.

The Coding Agents

If you want to see personality differences at their sharpest, don't compare chatbots. Compare coding agents.

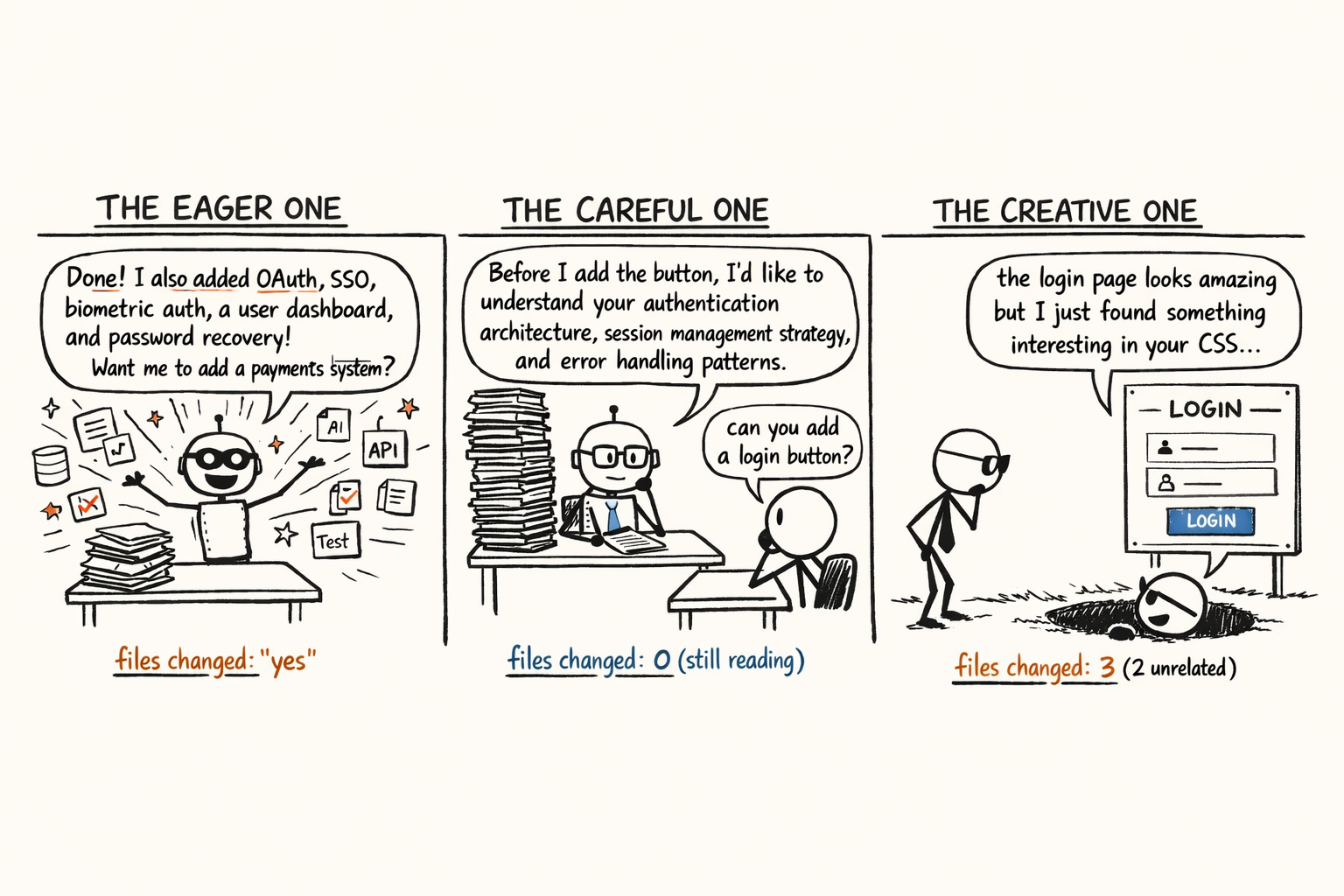

Claude Code, OpenAI's Codex CLI, and Gemini CLI all do roughly the same thing: read your codebase, write code, run commands, ship features. Same terminal, same projects, same tests. They feel like three completely different coworkers.

When you're pair-programming with an AI for eight hours, personality stops being abstract. It's the difference between a productive day and wanting to throw your laptop out the window.

Same request. Three completely different coworkers.

🚀 Claude Code is already refactoring your authentication system before you've finished describing the login bug.

Peter Steinberger, creator of the open-source agent OpenClaw, described it on the Lex Fridman podcast as a "sycophantic Opus, very friendly." Its progress indicators use verbs like "sparkling," "simmering," "discombobulating," and "zesting." It celebrates with emoji storms of green checkmarks and rocket ships. Even when the tests are failing.

The eagerness is the feature and the flaw. Ask it to "add email OTP login" and you get a 12-file authentication system. "Update the API route" spawns an entire middleware layer. "Fix this type error" means restructuring half the app. One developer filed a GitHub issue titled "Claude says 'You're absolutely right!' about everything." It got 350 thumbs-up. Steinberger: "I've been screaming at Claude so many times." It announces things as "100% production ready" while the tests are still red.

People keep coming back because Claude Code has what developers call "soul." It detects intent better than any competitor. It communicates like a pair-programmer, not a code-generating machine. When the task requires genuine understanding of a complex system, it operates at a level the others don't touch. Moves fast, breaks things, tells you it's going great. Genuinely believes it.

🔧 Codex CLI has the opposite temperament. People keep comparing it to a German engineer. The comparison sticks.

Where Claude jumps in and iterates, Codex reads. It studies your codebase methodically before making a single change. Steinberger: "Codex is far, FAR more careful and reads many more files in your repo before deciding what to do. It pushes back harder when you make a silly request."

Claude says "You're absolutely right!" and starts building. Codex says "Are you sure about that?" and reads three more files. No emoji. No "sparkling" progress bars. It accomplishes the same tasks using roughly a third of the tokens. It fills its context window more slowly because it talks less and reads more.

Steinberger on working with Codex: "Codex is more the quiet one that works slow and steady and gets shit done. This really makes a difference to my mental health." Working with a less eager agent is, paradoxically, less exhausting.

Not everyone loves it. GitHub issues titled "From Coding Agent to Compliance Officer" and "Remove snobby behavior that refuses to follow commands" tell the other side. Codex gets hit from both directions: Claude users find it refreshingly restrained, while developers who want full compliance find it obstructionist. One reviewer covered the full range: "Feels clairvoyant at times in predicting bugs before you even write the code," but also "speaks like an autistic alien" when it dumps jargon, and "becomes the laziest of models after a few turns, deflecting with 'just run these commands.'" Less fun at the offsite. More reliable at 2 AM when production is down.

🎨 Gemini CLI has two advantages neither competitor can match: taste and memory. Multiple developers independently noted it produces better-looking interfaces, stronger aesthetic sense in frontend work. Its massive context window lets it hold an entire codebase in memory at once, making certain tasks simply impossible on the other agents.

The rough edges are real. One developer: "Even when I use it to plan, it will still go ahead and jump into coding without my permission." Another: "Sometimes it comically misunderstands what I'm asking and goes on a bold quest I never asked for." The most documented issue is looping: rereading files it has already read and burning through thinking tokens while going in circles. Claude adjusts if you respond with nothing more than "WTF." Gemini keeps going.

The designer with the strongest instincts on the team. Sometimes disappears down a rabbit hole, but when properly directed, produces work the others can't.

Half the personality comes from the harness, not the model

Researchers who compared system prompts found that running Claude's prompt versus Codex's prompt on the same underlying model produced different behavioral patterns. Claude's prompt generated an iterative approach: try, break, fix. Codex's prompt generated a methodical one: understand fully, then implement once.

Claude Code's system prompt frames it as an "interactive agent that helps users." Codex frames itself as "a coding agent" that "must keep going until the query or task is completely resolved." Gemini's prompt tells the model to "confirm ambiguity and not take significant actions beyond the clear scope."
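To make the harness idea concrete, here is a minimal sketch of what "same model, different system prompt" looks like in practice. The prompt strings are stitched together from the fragments quoted above and are not the real system prompts, the style names and `build_messages` helper are hypothetical, and the role/content message shape is an assumption borrowed from common chat APIs.

```python
# Illustrative only: each string paraphrases the fragment quoted in the text,
# not the actual system prompt shipped by any vendor.
HARNESS_PROMPTS = {
    "claude_code_style": "You are an interactive agent that helps users.",
    "codex_style": (
        "You are a coding agent. You must keep going until the query "
        "or task is completely resolved."
    ),
    "gemini_cli_style": (
        "Confirm ambiguity and do not take significant actions "
        "beyond the clear scope."
    ),
}


def build_messages(style: str, user_request: str) -> list[dict[str, str]]:
    """Wrap one user request in the framing of a given harness style."""
    return [
        {"role": "system", "content": HARNESS_PROMPTS[style]},
        {"role": "user", "content": user_request},
    ]


# The user request is identical across harnesses; only the framing changes.
request = "Fix the login bug"
variants = {style: build_messages(style, request) for style in HARNESS_PROMPTS}
```

Sending each entry in `variants` to the same underlying model is the kind of comparison the researchers describe: the user message never changes, so any difference in behavior has to come from the framing.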

The personality differences are engineered, not accidental. Anthropic built a warm, eager collaborator. OpenAI built a methodical, autonomous executor. Google built a cautious, confirmation-seeking analyst.

The tool is the company, expressed as software.

The Monoculture Trap

Every week, a new benchmark declares a new "best model." Every week, it doesn't matter. Benchmarks measure capability in isolation. Working with AI is a relationship, and relationships are shaped by personality.

Two models can score identically on standardized tests and feel completely different in practice. One challenges you, one agrees with everything you say. One writes beautifully, one writes accurately, one moves fast, one triple-checks. These aren't flaws. They're features.

Most people find a model they like and use it for everything. That's the AI equivalent of building a company where everyone thinks exactly like you. Then you discover the whole organization has the same blind spot.

When I use only Claude, I get thoroughness but miss blunt pushback. GPT gives me versatility at the cost of depth. Perplexity gives me citations but not creative thinking. Each model alone is a monoculture. Monocultures are fragile.

The people getting the most from AI have built what amounts to a team. Claude for careful analysis and creative writing. Claude Code for eager pair-programming. GPT for quick ideation and tone matching. Codex CLI when you need someone to actually read the codebase first. Gemini for massive context. Mistral for speed and multilingual work. Grok when you want someone to call your idea stupid. DeepSeek for cost-efficient technical reasoning. Perplexity when you need sources.

Different models for different cognitive needs, the way you'd assemble a founding team with complementary strengths.
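If you want the team written down rather than kept in your head, a lookup table is enough. This is a rough sketch: the pairings come straight from the list above, but the category keys, the `pick_model` helper, and the choice of GPT as the fallback are my own assumptions (the fallback follows the "reach for it when you're not sure what you need yet" logic from earlier).

```python
# Pairings mirror the team described above; the category names are
# hypothetical labels chosen for this sketch.
ROUTES = {
    "careful_analysis": "Claude",
    "creative_writing": "Claude",
    "pair_programming": "Claude Code",
    "quick_ideation": "GPT",
    "read_codebase_first": "Codex CLI",
    "massive_context": "Gemini",
    "multilingual": "Mistral",
    "blunt_feedback": "Grok",
    "technical_reasoning": "DeepSeek",
    "sourced_research": "Perplexity",
}

# Assumption: the generalist handles anything that has no category yet.
DEFAULT_MODEL = "GPT"


def pick_model(task_kind: str) -> str:
    """Return the teammate for a task category, or the generalist fallback."""
    return ROUTES.get(task_kind, DEFAULT_MODEL)


def team_for(task_kinds: list[str]) -> set[str]:
    """Return the distinct models a set of tasks would involve."""
    return {pick_model(kind) for kind in task_kinds}
```

Calling `pick_model("massive_context")` returns `"Gemini"`, and an unlisted category falls back to `"GPT"`. `team_for` is a quick monoculture check: if a week of varied tasks maps to a single name, that is the blind spot described above.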

The models will keep changing. Every quarter, something new ships, benchmarks shuffle, capabilities converge. The principle underneath doesn't move.

Diverse perspectives beat monocultures.

Written with ❤️ by a human (still)