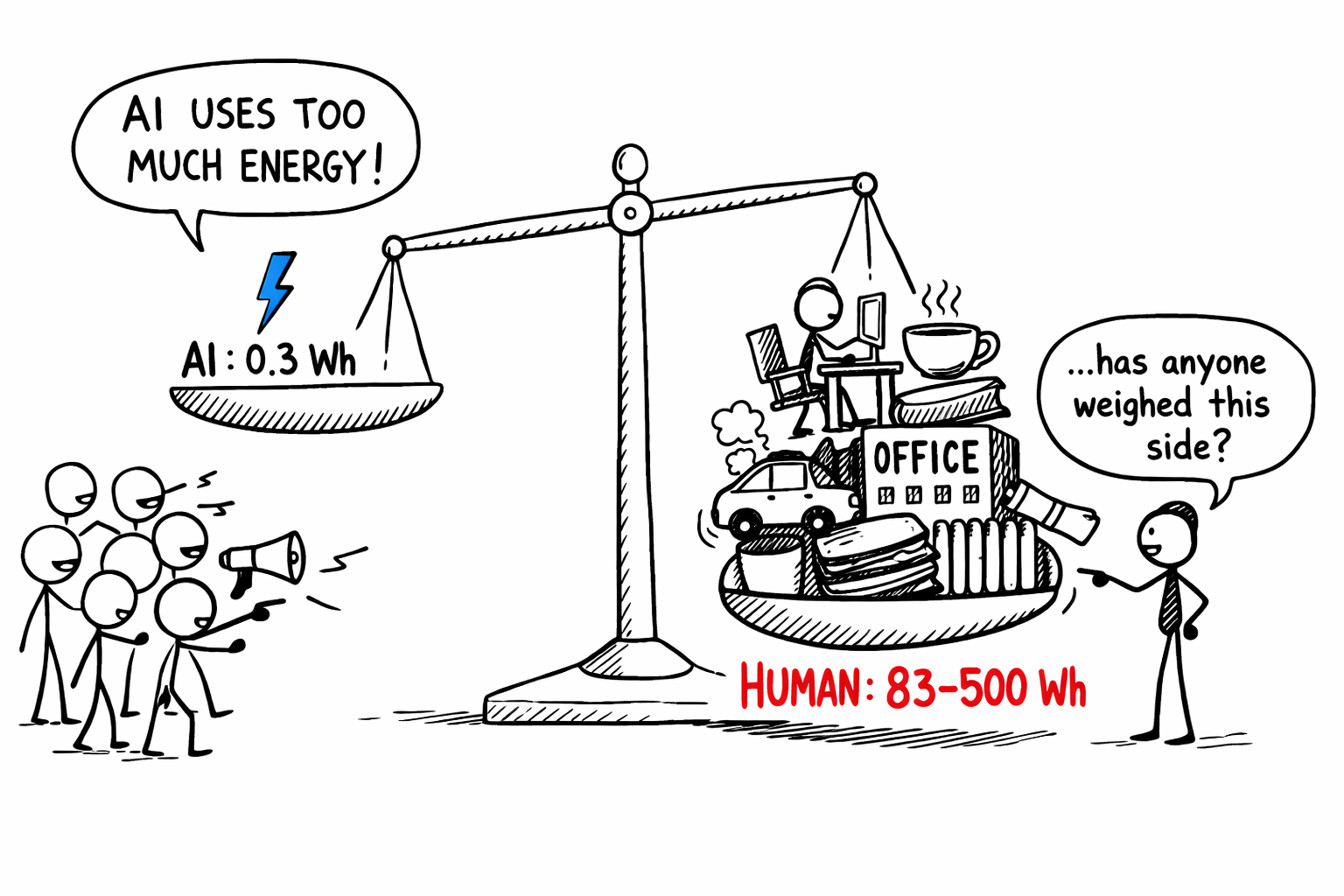

A ChatGPT query uses about 0.3 watt-hours of electricity. Google's Gemini, 0.24 Wh. That's the energy of running a microwave for one second, or streaming video for ten.

Twelve months ago, the best estimate was 9 Wh per query. The number dropped 33x in a single year. It will keep dropping.

But here's what never makes the headline: the alternative to AI answering your question isn't silence. It's a person answering your question. And that person wasn't born knowing the answer.

They were trained, for years, at enormous energetic cost. Then they sat in a heated office, commuted in a car, ate lunch, and spent twenty minutes thinking before giving you a response.

So what happens when you actually run the numbers on both sides?

The per-question gap

A knowledge worker at their desk doesn't run on brainpower alone. The brain draws about 20 watts. The full body, roughly 100. Add a computer and monitor at 150W, their share of office lighting, heating, and AC at another 300W, plus an amortized commute. All in: 500 to 1,000 watts.

The kind of question AI handles in 10 seconds takes a human 10 to 30 minutes.

500 watts for 10 minutes is 83 Wh. At the high end, 1,000W for 30 minutes is 500 Wh.

AI's 0.3 Wh versus a human's 83 to 500 Wh. That's a gap of roughly 300x to 1,700x. Per question.
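For anyone who wants to check the arithmetic, here is a minimal sketch using only the round numbers quoted above. The function and variable names are mine, chosen for illustration.

```python
# Energy per answered question, using the article's round numbers.
AI_WH_PER_QUERY = 0.3  # Wh per query, the ChatGPT estimate quoted above


def human_wh_per_question(power_watts: float, minutes: float) -> float:
    """Energy in watt-hours: power (W) multiplied by time (hours)."""
    return power_watts * minutes / 60


low = human_wh_per_question(500, 10)     # low end: 500 W for 10 minutes
high = human_wh_per_question(1000, 30)   # high end: 1,000 W for 30 minutes

print(f"Human: {low:.0f} to {high:.0f} Wh per question")
print(f"Gap vs AI: {low / AI_WH_PER_QUERY:.0f}x to {high / AI_WH_PER_QUERY:.0f}x")
```

Swap in your own wattage or thinking time and the gap moves, but it stays in the hundreds unless the human answers in well under a minute.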

A peer-reviewed study in Scientific Reports confirmed this at scale: AI emits 130 to 1,500 times less CO₂ per page of text than human writers, and 310 to 2,900 times less per image than illustrators.

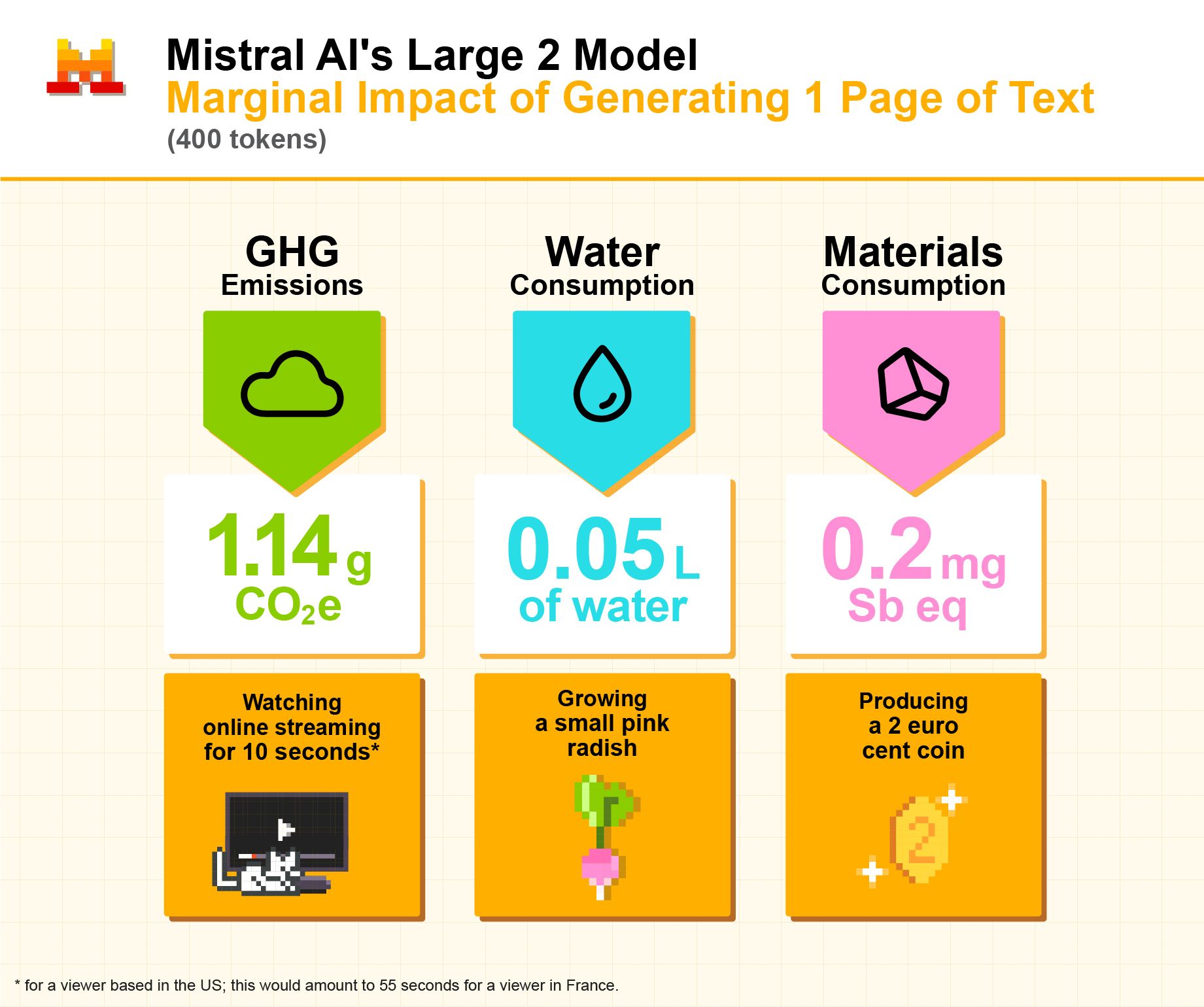

Mistral published their own lifecycle analysis for generating one page of text with their Large 2 model. The total footprint: 1.14 grams of CO₂, 0.05 liters of water, and 0.2 milligrams of antimony-equivalent in materials. The CO₂ is equivalent to watching online streaming for ten seconds. The water, to growing a small radish.

Mistral's lifecycle analysis for generating one page of text with their Large 2 model

Hannah Ritchie ran the individual math. If you ask an AI ten questions a day, every day, for an entire year, it adds about 0.03% to your daily electricity use. Even at a hundred queries a day, it's 0.3%. Your refrigerator uses more energy keeping your leftovers cold.
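The percentages can be reproduced with a quick sketch. The 10 kWh per day baseline below is my assumption, picked because it matches the percentages quoted here; it is not a figure taken from Ritchie's post, and your own household number will differ.

```python
WH_PER_QUERY = 0.3       # Wh per query, from the estimate quoted earlier
DAILY_USE_WH = 10_000    # ASSUMPTION: 10 kWh of electricity per person per day


def share_of_daily_use(queries_per_day: int) -> float:
    """Percent of one person's daily electricity use spent on AI queries."""
    return 100 * queries_per_day * WH_PER_QUERY / DAILY_USE_WH


for n in (10, 100):
    print(f"{n} queries/day -> {share_of_daily_use(n):.2f}% of daily electricity")
```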

Training is expensive on both sides. Output isn't equal.

Training GPT-4 took an estimated 50,000 to 62,000 MWh. Enough to power 5,000 homes for a year. Those are the numbers that make headlines.

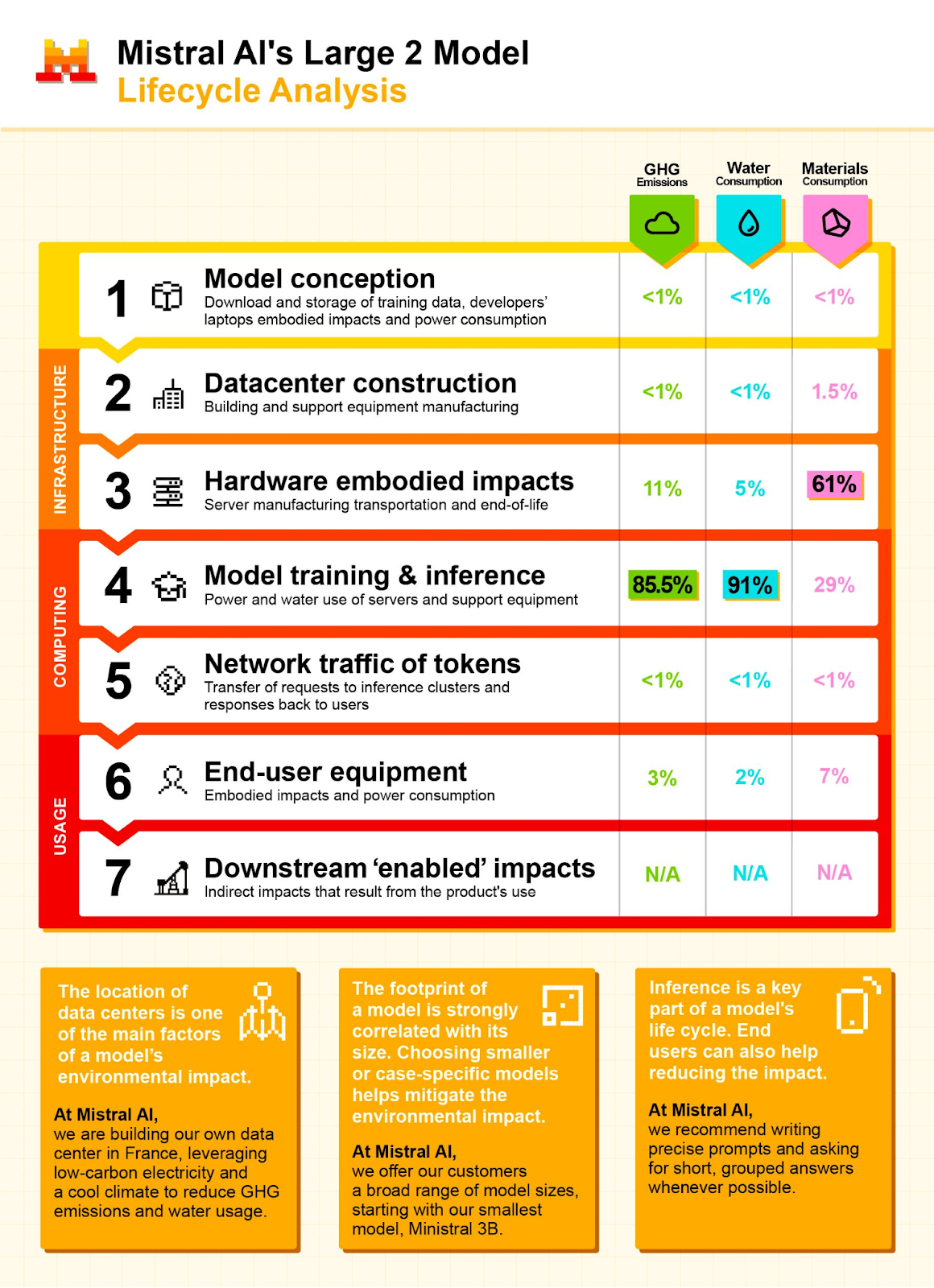

Mistral's lifecycle analysis of their Large 2 model shows where that energy actually goes. Training and inference account for 85.5% of total greenhouse gas emissions. Everything else (data storage, hardware manufacturing, network traffic, end-user devices) makes up the remaining 14.5%.

Mistral AI's lifecycle analysis of their Large 2 model — training and inference dominate the energy footprint

Nobody runs the same calculation on a human.

The brain runs at 20 watts around the clock. The body at 80 to 100 watts continuously. Over 16 years of formal education, just the biological baseline adds up to over 11,000 kWh. Factor in food production, classroom heating, school buses, computers, textbooks, and a conservative estimate lands at 50,000 to 200,000 kWh per expert. That's 50 to 200 MWh.
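The biological baseline is easy to verify. This sketch only restates the numbers above (80 to 100 watts, 16 years) and converts the article's all-in estimate from kWh to MWh; the helper name is hypothetical.

```python
HOURS_PER_YEAR = 24 * 365


def metabolic_kwh(watts: float, years: float) -> float:
    """Energy in kWh for a body running continuously at `watts` for `years`."""
    return watts * HOURS_PER_YEAR * years / 1000


baseline_low = metabolic_kwh(80, 16)
baseline_high = metabolic_kwh(100, 16)
print(f"Biological baseline: {baseline_low:,.0f} to {baseline_high:,.0f} kWh")

# The all-in estimate from the text, converted from kWh to MWh.
all_in_kwh = (50_000, 200_000)
all_in_mwh = tuple(kwh / 1000 for kwh in all_in_kwh)
print(f"All-in estimate: {all_in_mwh[0]:.0f} to {all_in_mwh[1]:.0f} MWh per expert")
```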

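On paper the model loses: GPT-4's 50,000 to 62,000 MWh is roughly what it takes to educate 250 to 1,200 human experts.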

The difference is what happens after. GPT-4's training produced a model serving over a billion queries per day. Sixteen years of human training produced one expert handling one conversation at a time, eight hours a day, five days a week.
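One way to make "what happens after" concrete is to spread each training cost over the answers it produces. This is a rough sketch under stated assumptions, not a measurement: the one-year deployment window, the 48 working weeks, and the 40-year career are my guesses. The training totals, the billion queries a day, the 8-hour days, and the 10 to 30 minutes per question come from the text.

```python
WH_PER_MWH = 1_000_000


def training_wh_per_answer(training_mwh: float, answers: float) -> float:
    """Training energy amortized over every answer produced, in Wh."""
    return training_mwh * WH_PER_MWH / answers


# Model: a billion queries per day (from the text).
# ASSUMPTION: the model stays in service for one year.
model_answers = 1_000_000_000 * 365
model_low = training_wh_per_answer(50_000, model_answers)
model_high = training_wh_per_answer(62_000, model_answers)

# Human: 8 hours a day, 5 days a week, 10 to 30 minutes per question (from the text).
# ASSUMPTIONS: 48 working weeks per year, 40-year career.
working_hours = 8 * 5 * 48 * 40
most_answers = working_hours * 60 / 10     # one answer every 10 minutes
fewest_answers = working_hours * 60 / 30   # one answer every 30 minutes
human_low = training_wh_per_answer(50, most_answers)
human_high = training_wh_per_answer(200, fewest_answers)

print(f"Model: {model_low:.2f} to {model_high:.2f} Wh of training per answer")
print(f"Human: {human_low:.0f} to {human_high:.0f} Wh of training per answer")
```

Change the deployment window or the career length and the numbers shift, but the model's training cost per answer stays a fraction of a watt-hour while the human's stays in the hundreds.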

Want more AI capacity? Spin up servers. Takes hours. Want another human expert? Start from birth. Another twenty-plus years.

The real concern: absolute consumption will increase

None of this means AI's total energy footprint is small. It means the per-unit cost is small. And that's exactly the problem.

When the unit cost of a resource drops, total consumption tends to increase. Economists call this the Jevons Paradox. If AI makes intelligence 1,000 times cheaper, we won't replace existing queries one-for-one. We'll invent entirely new categories of demand: autonomous agents running 24/7, personalized tutoring for every student, real-time translation of every conversation.
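The mechanism is simple multiplication: total energy is cost per unit times the number of units. The sketch below uses purely hypothetical volumes to show the break-even point; only the 1,000x efficiency figure echoes the text.

```python
def total_energy_wh(unit_wh: float, volume: float) -> float:
    """Total energy is the per-unit cost multiplied by how many units are consumed."""
    return unit_wh * volume


# HYPOTHETICAL numbers, for illustration only.
old_unit_wh, old_volume = 300.0, 1_000_000      # expensive answers, modest demand
new_unit_wh, new_volume = 0.3, 5_000_000_000    # 1,000x cheaper, 5,000x more demand

before = total_energy_wh(old_unit_wh, old_volume)
after = total_energy_wh(new_unit_wh, new_volume)

efficiency_gain = old_unit_wh / new_unit_wh   # demand growth needed to break even
demand_growth = new_volume / old_volume

print(f"Efficiency gain: {efficiency_gain:,.0f}x, demand growth: {demand_growth:,.0f}x")
print(f"Total energy changed by {after / before:.1f}x")
```

Whenever demand grows faster than efficiency improves, the total goes up even though every individual answer got cheaper.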

This is already happening. OpenAI went from zero to over a billion queries per day in two years. The IEA projects data center demand could double to 945 TWh by 2030, equivalent to Japan's entire current electricity consumption.

But this pattern isn't new. Cars were more efficient than horses, so we built suburbs and drove 100 times more. The internet beat postal mail, so we sent a trillion times more messages. Nobody argues we should have stuck with horses and stamps.

Total energy consumption will increase. That's not a maybe, it's a certainty. The question is what we're getting for that energy.

Everyone's having the wrong argument about AI and energy. They're asking "does AI use a lot of electricity?" Of course it does. Training frontier models takes enormous power, and serving billions of queries adds up.

But the implicit assumption, that the alternative is somehow free, is wrong. Humans are extraordinarily expensive to train and operate when you actually count the energy.

The question was never "how much?" It was always "compared to what?"

Sources & Deep Dive

AI energy per query:

- Epoch AI (Feb 2025). "How Much Energy Does ChatGPT Use?" Estimated ~0.3 Wh per GPT-4o query. Notes that major chatbots from OpenAI, Anthropic, and Google are "roughly comparable" in energy costs. [epoch.ai/gradient-updates]

- OpenAI CEO Sam Altman confirmed 0.34 Wh per query (2025).

- Google (Aug 2025). Reported median Gemini prompt at 0.24 Wh, with energy per query dropping 33x over the prior year. Via MIT Technology Review.

- Jegham et al. (2025). "How Hungry is AI?" Benchmarking study ranking Claude 3.7 Sonnet as the most eco-efficient frontier model tested (score: 0.886). [arxiv.org/html/2505.09598v1]

AI training energy:

- Patterson, D. et al. (2021). "Carbon Emissions and Large Neural Network Training." Google & UC Berkeley. GPT-3 training: ~1,287 MWh.

- Ludvigsen, K.G.A. (2024). "The Carbon Footprint of GPT-4." Towards Data Science. Estimated 50,000–62,000 MWh based on ~25,000 A100 GPUs running 90–100 days.

- Epoch AI estimates Claude 3.5 Sonnet at ~400B parameters, suggesting comparable training scale to GPT-4o.

Human brain energy:

- Attwell, D. & Laughlin, S.B. (2001). "An energy budget for signaling in the grey matter of the brain." J. Cerebral Blood Flow & Metabolism. Brain power: ~20W.

- Scientific American (2012). "Does Thinking Really Hard Burn More Calories?" Brain: ~12.6W of ~63W resting metabolic rate.

- PNAS (2021). "Brain Power." Confirms 20W budget, 2% body weight / 20% metabolic load.

- Bond University / The Conversation (2023). "How much energy do we expend using our brains?" Brain: ~0.3 kWh/day.

AI vs human carbon emissions (peer-reviewed):

- Tomlinson, B. et al. (2024). "The carbon emissions of writing and illustrating are lower for AI than for humans." Scientific Reports 14, 3732. DOI: 10.1038/s41598-024-54271-x. AI emits 130–1,500x less CO₂ per page of text; 310–2,900x less per image.

Per-task energy comparisons:

- Bilancioni, M. (2024). "Energy Efficiency: AI vs. the Human Brain." Medium. AlphaGo Zero: 0.042 Wh/move vs. human: 0.39 Wh/move. Graduate-level Q&A: human experts ~10 Wh/question vs. AI at fractions of a Wh.

Education and infrastructure energy:

- U.S. Energy Information Administration. "Commercial Buildings Energy Consumption Survey: Education Buildings." ~62,700 BTU/sq ft annually.

- Pontzer, H. et al. (2021). "Daily Energy Expenditure through the Human Life Course." Science. Total human energy expenditure across life stages.

AI energy projections and context:

- International Energy Agency. Data center demand projected to reach ~945 TWh by 2030.

- MIT Technology Review (May 2025). "We did the math on AI's energy footprint." Notes that OpenAI, Anthropic, and Google face criticism for lack of energy transparency.

- Hannah Ritchie (Aug 2025). "What's the carbon footprint of using ChatGPT or Gemini?" Sustainability by Numbers. 10 daily queries = 0.03% of daily electricity use. Gemini energy per query dropped 33x in 12 months.

- Mistral AI (2025). Lifecycle analysis of Large 2 model: 1.14g CO₂e, 0.05L water, 0.2mg Sb eq per page of text (400 tokens).

- Benjamin Todd (2025). "The environment is a terrible reason to avoid ChatGPT." Substack.

- Anthropic stated in its US AI Action Plan response that it works with "cloud providers that prioritize renewable energy and carbon neutrality" and recommended building 50 GW of dedicated AI power capacity by 2027.

Written with ❤️ by a human (still)